Account deletion @ bkmrkd.it

I’ve been struggling with the account deletion feature at bkmrkd.it, specifically for users with a large number of bookmarks. The Lambda process time limit makes it a bit trickier than in a normal program: you have to limit the amount of CPU time per call. So the simple method I tried first was to paginate through the list of bookmarks (like browsing through your bookmarks) and delete them in batches, using SQS to trigger each batch. This triggered another “hidden” feature I didn’t know about while testing: recursive loop detection and auto remediation.

At first I thought I missed something in my code as the deletion process didn’t finish as expected but stopped halfway. It all became clear the next day when I received an email notification from AWS:

Hello,

AWS Lambda has detected that one or more Lambda functions in your AWS Account: ########## are being invoked in a recursive loop with other AWS resources. To prevent unexpected charges from being billed to your AWS account, Lambda has stopped the recursive invocations listed in the ‘Affected resources’ tab of your AWS Health Dashboard.

This scared me at first, but my trusty AI companion walked me through some of the options. The difficulty is that, unlike in relational databases, there is no way to do a “DELETE FROM TABLE WHERE COLUMN = X”; you have to do a scan or query and then iterate through the result set, which is in itself time-consuming on large datasets. As I stored 2 records for each bookmark, one for quick lookup and finding and one with all the details, this made it even more difficult. So I did a rethink of the data model, which resulted in a single record per bookmark.

The records now all have the same PK, which makes deleting everything from the same account easier. Another design choice was to move the bulk deletion out of Lambda and process it locally. I now only delete the account details directly and leave the bulk deletion for a later moment outside of Lambda.
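
With every record of an account under one partition key, the cleanup comes down to a query on that key followed by batched deletes. Below is a minimal sketch of the batching step in Python. The PK and SK attribute names and the key values are hypothetical, and it assumes the DynamoDB BatchWriteItem limit of 25 requests per call. It only builds the request payloads and does not talk to AWS.

```python
from itertools import islice

# DynamoDB's BatchWriteItem accepts at most 25 put/delete requests per call.
BATCH_LIMIT = 25


def chunked(items, size=BATCH_LIMIT):
    """Yield successive lists of at most `size` items."""
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def delete_requests(keys):
    """Turn primary keys into delete requests, one list per batch."""
    for batch in chunked(keys):
        yield [{"DeleteRequest": {"Key": key}} for key in batch]


# Hypothetical keys: every bookmark of one account shares the same PK.
# Values are shown as plain strings; the low-level API expects typed values.
keys = [{"PK": "ACCOUNT#42", "SK": f"BOOKMARK#{n}"} for n in range(60)]
batches = list(delete_requests(keys))
print([len(batch) for batch in batches])  # [25, 25, 10]
```

Each inner list would be the request list for one table in a single batch write call, so 60 bookmarks take three calls instead of 60 single deletes.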

By the way, the export functionality was quite the opposite: easy and without significant problems. It is basically the same idea because of the time limit. I chunk through all the bookmarks outside the main program and build a set of JSON files, which I zip together at the end before sending an email with a download link. Works like a charm.
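
The chunk-and-zip idea can be sketched in a few lines of Python using only the standard library. This is not the actual bkmrkd.it code: the function name, file names and chunk size are made up for illustration, the chunks are written straight into the archive, and the email step is left out.

```python
import json
import tempfile
import zipfile
from pathlib import Path


def export_bookmarks(bookmarks, out_dir, chunk_size=500):
    """Write bookmarks as numbered JSON files into one zip archive."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    archive_path = out_dir / "bookmarks-export.zip"  # hypothetical file name
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        starts = range(0, len(bookmarks), chunk_size)
        for index, start in enumerate(starts, start=1):
            chunk = bookmarks[start:start + chunk_size]
            # Each chunk becomes its own JSON file inside the archive.
            archive.writestr(f"bookmarks-{index:04d}.json", json.dumps(chunk, indent=2))
    return archive_path


# Made-up sample data: 1200 bookmarks end up in three JSON files.
sample = [{"url": f"https://example.com/{n}", "tags": ["demo"]} for n in range(1200)]
with tempfile.TemporaryDirectory() as tmp:
    with zipfile.ZipFile(export_bookmarks(sample, tmp)) as archive:
        print(archive.namelist())
```

Keeping each chunk small means no single step has to hold or serialise the whole collection at once.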

Fred again.. & Thomas Bangalter

First thing I thought when reading about this was: Thomas who? Then, while reading and listening, I recognised it: Thomas Bangalter was one of the illustrious duo Daft Punk. I didn’t recognise him without the helmet. This video has been on repeat for several days now, it’s a banger. Fred again.. with Daft Punk doing a 2 hour long DJ set. What can I say, it’s epic. Listen and enjoy!

Restarting bkmrkd.it

It is finally in a state that is usable and presentable, I’ve been working on and off in my spare time since the end of summer last year. I wanted to learn and experience the benefits of using a serverless platform. The simplest way to start that journey was with Chalice, a ready made framework to develop for AWS Lambda.

The project that I’ve wanted to reboot was my bookmarking website bkmrkd.it which I developed some time ago using the PHP Laravel framework but never quite finished it. It was usable for me but I never completed user registration and other features that were still on the todo list.

The current release is working, but not completely finished or polished. All the information is there and the basics work, you can register and add, edit and delete bookmarks with tags. It is even possible to import bookmark files from your browser to give you a head start. There might be some minor bugs, I’m still testing and debugging but it is good enough to release it publicly.

Still to do:

- export functionality (will time out because of the time limit for lambda functions, need to implement a background process for this)

- deleting user accounts (same problem, time limit if you have a large amount of bookmarks)

Dropping Multipass for OrbStack

I’ve had it with Multipass. Again, after upgrading to a newer version of OSX (Tahoe in this instance), Multipass isn’t starting up, with the dreaded “can’t connect to socket” message. I could not resolve it; even restarting the daemon and re-installing didn’t help. Meanwhile, next to it I had OrbStack running with an X86 Ubuntu VM and a local-stack docker image for my Chalice project, which was still running as expected.

This made me rethink Multipass. After some thinking and a small experiment I decided to drop Multipass completely and switch all my VM projects over to OrbStack, which was easier than expected as it also supports cloud-init, which I was already using for creating VMs. The only change needed was to my bash setup scripts, as the command line for OrbStack is different.

The command line for OrbStack has some peculiarities which I will document here as I had to discover them by trial and error and digging through forums, etc…

Orb will create a user in your VM which is identical to your Mac username. So to use the regular ubuntu user as your main user you have to specify this when you create your VM.

orb create -a $ARCH -c $CLOUDINIT.yaml -u $USERNAME ubuntu:$VERSION $VMNAME

where

- $ARCH is either arm64 (for native Mx) or amd64 (for X86 compatible VM’s)

- $CLOUDINIT is your cloud-init config file (without the .yaml extension)

- $USERNAME is the primary username to be installed on this VM, likely ubuntu

- $VERSION is the version of Ubuntu to install but is optional, if left out the latest version will be installed

- $VMNAME is the name of your VM, will be accessible as $VMNAME.orb.local on your network.

It is not possible to set the amount of CPU, memory or disk space per VM. You can only set the maximum amount of memory and CPU for the complete OrbStack environment.

Copying files to and from the VM is simple, using orb push or orb pull commands:

orb push -m $VMNAME source destination

Executing commands is simple but has some intricacies, as where they are executed is not always clear-cut, especially if your command is longer and uses piping, for instance. You might end up piping the output of a command on your VM to a file on your Mac. For instance: orb -m $VMNAME sudo service mysql restart is pretty straightforward but:

orb -m $VMNAME mysql -uroot -psecret dbname < /home/ubuntu/projects/outfile.sql

will let you know that the file isn’t found, because the < redirection is handled by the shell on your Mac, where that file does not exist. To solve this you have to use quotes and the -s option:

orb -s -m $VMNAME 'mysql -uroot -psecret dbname < /home/ubuntu/projects/outfile.sql'
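
If the setup scripts were driven from Python instead of bash, the same quoting rule could look like the sketch below. The helper and the VM name are hypothetical. The only thing it relies on is what is described above: the -s option together with the whole command line passed as one argument. The snippet just builds and prints the argument list; it does not run orb.

```python
import shlex


def orb_shell_command(vm_name, command):
    """Build the argument list for running `command` through a shell in the VM."""
    # The whole command line, redirection included, travels as ONE argument,
    # so the local shell never gets a chance to interpret the `<`.
    return ["orb", "-s", "-m", vm_name, command]


argv = orb_shell_command(
    "devbox",  # hypothetical VM name
    "mysql -uroot -psecret dbname < /home/ubuntu/projects/outfile.sql",
)
print(shlex.join(argv))
# To actually run it you could use: subprocess.run(argv, check=True)
```

Passing a list to subprocess.run means no local shell is involved at all, which sidesteps the question of where the redirection is evaluated.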

Something I haven’t solved yet is that using cloud-init to set the hostname or FQDN does not work.

I’m abandoning my multipasssetup project as I won’t be using it anymore. I will create something similar for OrbStack.

Embrace boredom

This video, which I noticed via Kottke, really hit me. I really try to take time out to be bored, but there was no real drive or meaning behind it. For instance, I only work 4 days of the week because I need time to unwind, but also time to process and order everything I’ve encountered.

Arthur Brooks really brings his point across with some good examples but also has some excellent suggestions on how to get and stay bored!

Chalice development and deployments

I’ve been playing with AWS Chalice and rebuilding a bookmarking web app I had earlier written in PHP Laravel. I wanted to learn serverless development and this looked like the simplest route to get started using a known language, Python. I also threw in some LLM support, using the free version of ChatGPT as an assistant in learning the new environment.

Developing and running it locally was easy: there is a docker image available for having your own DynamoDB as a backend database, and Chalice is a simple install. The hard part came when development was finished and I had to get it running on the actual AWS infrastructure. It all looked fairly easy, but then the errors started appearing. I’m documenting my findings here so others will find the working solution more easily than I did.

The first error I ran into is that, because I develop on an M1 Mac, everything was based on the ARM architecture, and there is no feature yet in Chalice to deploy to the ARM AWS infra. It just defaults everything to the X86 infrastructure. There is a feature request to handle this, but it looks like development on Chalice has slowed down. My quick thinking was that my Mac supports X86 with Rosetta2 and I would just need to spin up an X86 Ubuntu VM to build the Chalice package. Next issue: Multipass does not support this yet. Luckily I found OrbStack, where the architecture of your VM is selectable; the downside is that you can’t set the amount of CPU or memory per VM. It does support cloud-init, just like Multipass, so my setup scripts are re-usable.

Second error, permissioning. Chalice, by default, creates the roles it needs to execute the lambda functions. However it leaves out the access rights for the DynamoDB table I needed. ChatGPT went off the rails here by proposing several different suggestions, which followed after I responded that the solution didn’t work. First I had to include a policy.json in the root of the project, next solution was to include the policy in the config.json which also didn’t work. A classic search showed me this and this article which showed me how it is done. First in your config.json add "autogen_policy": false in the required stage and then create a policy-stage.json with the details in the .chalice directory. Later I found the official documentation which described the same solution.
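
To make the policy-stage.json part more concrete, here is a rough Python sketch that generates such a file for a stage. It is an illustration under assumptions, not my actual policy: the table ARN, the stage name "dev" and the list of DynamoDB actions are placeholders, and the CloudWatch Logs statement is there on the assumption that a hand-written policy also has to cover logging once autogen_policy is switched off.

```python
import json
import tempfile
from pathlib import Path

# Placeholder ARN; use your own region, account id and table name.
TABLE_ARN = "arn:aws:dynamodb:eu-west-1:123456789012:table/bookmarks"


def build_policy(table_arn):
    """Return an IAM policy document for logging plus one DynamoDB table."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Assumption: logging must be granted by hand without autogen.
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "arn:aws:logs:*:*:*",
            },
            {
                # Placeholder action list; trim it to what the app needs.
                "Effect": "Allow",
                "Action": [
                    "dynamodb:GetItem",
                    "dynamodb:PutItem",
                    "dynamodb:UpdateItem",
                    "dynamodb:DeleteItem",
                    "dynamodb:Query",
                    "dynamodb:Scan",
                ],
                "Resource": [table_arn, f"{table_arn}/index/*"],
            },
        ],
    }


def write_policy(project_dir, stage, table_arn):
    """Write the policy to .chalice/policy-<stage>.json and return the path."""
    path = Path(project_dir) / ".chalice" / f"policy-{stage}.json"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(build_policy(table_arn), indent=2))
    return path


# Demo in a temporary directory instead of a real project.
with tempfile.TemporaryDirectory() as project:
    print(write_policy(project, "dev", TABLE_ARN).name)  # policy-dev.json
```

Generating the file from code is optional; writing the same JSON by hand in the .chalice directory works just as well.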

A related problem was using different AWS Access Keys for each stage of development, so using different identities for dev, acceptance and production. Again ChatGPT gave different answers depending on the question, but eventually I found a solution that worked. You store your credentials in ~/.aws, where you have two files: config for your region and credentials for your access and secret key combos. For the credentials it is straightforward, just use [stage] to define the applicable stage, but for config it is different and you should use [profile stage]. See the following example for credentials:

[default]

aws_access_key_id = yourkey

aws_secret_access_key = yoursecret

[acceptance]

aws_access_key_id = yourkey

aws_secret_access_key = yoursecret

and this for config:

[default]

region = eu-west-1

[profile acceptance]

region = eu-west-1
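
The difference in section names is easy to get wrong, so here is a small Python sketch that reads both examples with the standard configparser module and looks up one stage. The helper names are made up for illustration, and the file contents are the placeholders from above, inlined as strings instead of read from ~/.aws.

```python
import configparser

CREDENTIALS = """
[default]
aws_access_key_id = yourkey
aws_secret_access_key = yoursecret

[acceptance]
aws_access_key_id = yourkey
aws_secret_access_key = yoursecret
"""

CONFIG = """
[default]
region = eu-west-1

[profile acceptance]
region = eu-west-1
"""


def section_for(profile, kind):
    """Return the section header used for `profile` in the given file kind."""
    # Only the config file prefixes named profiles with "profile ".
    if kind == "config" and profile != "default":
        return f"profile {profile}"
    return profile


def load_profile(profile):
    """Merge the credentials and config settings of one profile."""
    credentials = configparser.ConfigParser()
    credentials.read_string(CREDENTIALS)
    config = configparser.ConfigParser()
    config.read_string(CONFIG)
    settings = dict(credentials[section_for(profile, "credentials")])
    settings.update(config[section_for(profile, "config")])
    return settings


print(section_for("acceptance", "credentials"))  # acceptance
print(section_for("acceptance", "config"))       # profile acceptance
print(load_profile("acceptance"))
```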

Third and last error: packaging static files. As I’m using Jinja templates to create the webpages in Python, they need to be included in the packaging for deployment. Again ChatGPT gave non-working solutions and the web had no definite answer either. Some indicate using the vendor directory, where you can store specific non-standard Python packages; others specify using the chalicelib directory.

The official documentation is not quite clear on the route. I’m currently using the vendor route but will try the chalicelib option as well.

Privacy and independence

A blog post by Lukas Mathis has been sitting open in my browser tab for almost two weeks now. Every time I see it, it reinforces something I’ve believed for years: our growing dependence on big tech services comes with hidden costs that many of us don’t fully consider. Lukas’s post strikes a chord with me on multiple levels, and I think it’s worth exploring why digital independence matters more than ever.

The most obvious concern is privacy. When you upload your photos to Google Photos, your documents to Google Drive, or your files to any cloud service, you’re essentially handing over control of your personal data. Sure, these companies have privacy policies, but those policies can change. And even with the best intentions, your data becomes subject to their business models, government requests, and potential security breaches. Lukas uses photos as his primary example, but the issue extends far beyond image storage. Your emails, documents, notes, contacts, calendars—essentially your entire digital life—can end up in the hands of companies whose primary allegiance is to their shareholders, not to you.

The second issue that resonates with me is dependency. Cloud services can disappear overnight. Companies can change their terms of service, increase prices dramatically, or simply decide you’re no longer welcome on their platform—all without meaningful recourse for users. I’ve seen this happen repeatedly. Google alone has a graveyard of discontinued services that once hosted people’s important data. When you’re dependent on a single provider, you’re essentially betting your digital life on their continued goodwill and business success.

This is why I’ve made deliberate choices to maintain my digital independence, even when it means sacrificing some convenience.

I gravitate toward Apple’s ecosystem not just for the user experience, but because their business model doesn’t depend on harvesting my personal data. I also deliberately limit my social media usage—partly for mental health reasons, but also to reduce my digital footprint. For photos, I’ve skipped iCloud Photos entirely. Instead, I store my photo library on a local NAS (Network Attached Storage) device, which I then back up to the cloud using strong encryption. This gives me the best of both worlds: local control over my data with the safety net of remote backup.

One of my longest-running projects has been hosting my own email server—something I started doing over 20 years ago. Back then, it meant manually compiling and configuring everything from scratch, spending countless hours debugging mail server configurations and fighting spam filters. Today, I use Mail-in-a-Box, which has transformed email self-hosting from a masochistic technical exercise into something remarkably straightforward. The software handles all the complex configuration automatically while still giving me complete control over my email infrastructure.

The beauty of this approach extends beyond just privacy. Because I control the entire stack, I can host it with any VPS provider I choose. If I’m unhappy with my current host’s service, pricing, or policies, I can simply spin up a new server elsewhere and restore from backup. This portability is freedom in the truest sense.

If you’re interested in taking back control of your digital life, you’re not alone. The self-hosting community has grown tremendously, and the barrier to entry has never been lower. Lukas mentions the awesome-selfhosted GitHub repository, which is an incredible resource. This curated list contains hundreds of open-source applications that can replace virtually any cloud service you’re currently using. Whether you need file storage, media streaming, password management, or collaborative tools, there’s likely a self-hosted solution available.

I won’t pretend that digital independence comes without costs. Self-hosting requires time, some technical knowledge, and ongoing maintenance. You become responsible for updates, security, and troubleshooting. The convenience of “it just works” that comes with major cloud services is genuinely valuable. But for me, the trade-offs are worth it. The peace of mind that comes from knowing I control my data, that I’m not subject to arbitrary policy changes, and that I can switch providers without losing years of digital history—that’s invaluable.

Some small updates

Last weekend I updated my Mail-in-a-Box server because my old server was still running Ubuntu 18 and the current version requires Ubuntu 22.04. I was several versions behind because I dreaded the upgrade process. In the end it went almost smoothly; I had some struggles with restoring my data, but that was caused by my inexperience. I’m still in awe of how simple it has been made.

By re-installing my box I also had to go through my own instructions for running AWStats for the static websites that are hosted on the mailserver. I noticed some small inconsistencies and made some steps a bit clearer on what to do.

The other change I made is the addition of another configuration file in my multipass setup scripts. I’ve been playing with AWS Chalice. It’s a framework on top of AWS Lambda functions and DynamoDB. The installation includes a docker image of a local DynamoDB server to use for local development. The file is called chalicedev.yaml. Have fun.

Multipass update, using config file

I’ve been quite busy at my regular job and didn’t have the time for personal projects or blogging, sorry for that. As you might remember from earlier posts, I don’t like to develop on my machine itself; I like to create purpose-built virtual machines to develop specific projects. This helps me separate requirements and conflicting libraries and versions of software which might break projects. I use Multipass as the virtualisation tool for managing virtual development machines on my Mac Studio, using cloud-init for automation.

Up until now I was happy using the same configuration file for every VM, as my personal projects didn’t differ that much from a programming tools perspective. With my latest project I found out that they do, and I needed an option to create different configurations per VM. So I’ve added a command line option to make the configuration of the VM flexible. It’s the YAML file that cloud-init uses to install all the required packages and set the proper configuration items.

It required me to adjust the documentation and to update the remote multipasssetup repository on BitBucket. I quickly ran into issues with Git, as I had made a correction remotely (resolving a typo) without merging, so my local and the remote repo were out of sync. It took some effort to get it resolved as I’m no git expert ;-).

Connecting a Psion Series 3a to my Mac

I follow James Weiner on Mastodon (@hypertalking@bitbang.social) because of his beautiful one-pixel art and his work on restoring old computers. Last week he boosted a post which mentioned Psion PDAs, which led me down a rabbit hole that ended at the Psion User Group, which is maintained by Alex Brown (@thelastpsion@bitbang.social). I remembered still having a Psion Series 3a myself, stowed away somewhere in a closet together with a Palm V and a Tungsten T3.

The journey got me thinking about whether it would be possible to use the Psion, which still looks like a great device, in a current setup and have it sync data with my Mac Studio. This sent me on another journey searching for accurate documentation, but I found that lacking. It was mostly for Windows based computers (32 bit and not working on most 64 bit machines); for Apple, which was a bit more obscure at that time, there was even less. If there was anything for MacOSX it was for Intel based machines and definitely not for Apple Silicon.

Today I got the connectivity working with the excellent help of Alex Brown and Chris Farrow on the Psion User Discord server. I thought I’d write down the steps of how I got it working as a reference for others who might want to follow me down this rabbit hole as well.

The first problem is the physical connection. It is based on a sub D9 connector, more commonly known as a serial port. Your Psion Series 3a came with a special cable called the 3 Link, which was a serial connection interface. The problem is that current computer hardware does not have any of the serial or even parallel connectors that were once ubiquitous. To resolve this I had to buy a USB to Serial interface, which I got via Amazon. You can use others, but they must have the PL2303 chip, which takes care of the proper communication.

Next you’ll have to install the driver, which was to my surprise available in the App Store: PL2303 Serial. The next step is optional, but I found it very useful to test whether there is any connectivity between the Mac and the Psion and whether the cable is doing its thing. You’ll use a terminal emulator to connect both devices. I’ve used SerialTools on my Mac because it’s free and available in the App Store. On the Psion you’ll need the Comms tool; if it is not installed, you can use Psion-I, select the C: drive, and install Comms.app (before you do, make sure you have disabled the 3-Link).

Open the Comms tool on your Psion, and on the Mac open SerialTools, select the PL2303 port, set the baud rate to 9600 and press connect. What you type on the Mac should appear on the Psion screen and vice versa. This proves a proper connection between your Psion Series 3a and your Mac.

There are several options for the next phase: installing plptools, running a Windows VM to use the PsiWin program, or using DosBox staging to run Mclink.

I have chosen to start with the DosBox option as it was the simplest option to get started. I dabbled with the plptools option but I haven’t got it working yet. So here are my instructions on using the DosBox option.

First you need to download the Mclink program from here, unzip it in a separate directory which you will reference later.

Download the latest version of DosBox staging from their download page and install the program. When installed, first start the application and on the new Z:\> prompt type the command: config -wc to create a new config file in ~/Library/Preferences/DOSBox called dosbox-staging.conf. You’ll need to edit this to make a link to your new serial connection. Mine was located at /dev/tty.PL2303G-USBtoUART8340; check using the Terminal whether yours is called the same. Find the line that starts with serial1 and make it look like: serial1 = direct realport:tty.PL2303G-USBtoUART8340.
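
If you’d rather not look up the device name by hand, a few lines of Python can list the candidates and print the matching serial1 line. This is just a convenience sketch: the helper name is made up, and the /dev/tty.PL2303* pattern is an assumption based on how the adapter showed up on my Mac, so yours may be named differently.

```python
import glob
import os


def serial1_line(device_path):
    """Format the serial1 setting for a serial device path such as /dev/tty.X."""
    # The setting takes the device name without the /dev/ prefix.
    device_name = os.path.basename(device_path)
    return f"serial1 = direct realport:{device_name}"


# Assumed naming pattern; prints nothing when no adapter is plugged in.
for path in sorted(glob.glob("/dev/tty.PL2303*")):
    print(serial1_line(path))
```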

At the end of the file you can add commands that will be executed during startup. The command I added mounts the directory where I extracted my copy of Mclink: mount c /directory/location/of/mclink

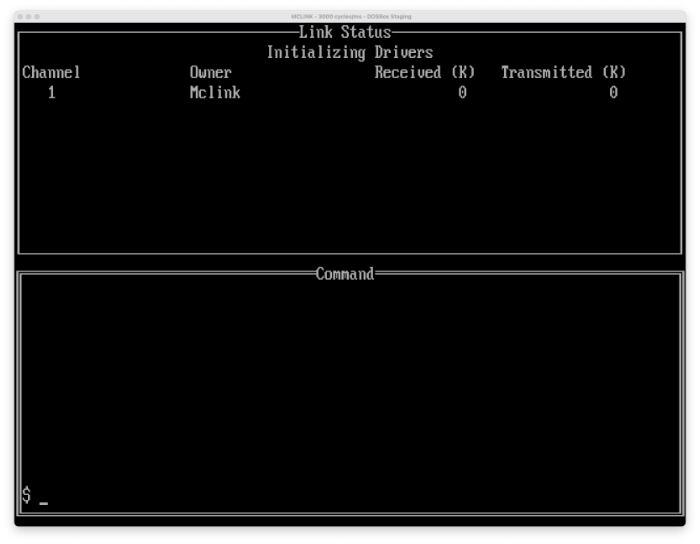

To use the new configuration file you’ll need to restart the DosBox program. After it’s restarted you can issue the command c:\mclink when the mclink program has started you should see a screen like:

Next connect your Psion Series 3a using your new USB to serial cable with the 3link cable. Start the 3-Link program by pressing Psion-L (Key combination, bottom left key and the L together) and turn it on at 19200 baud.

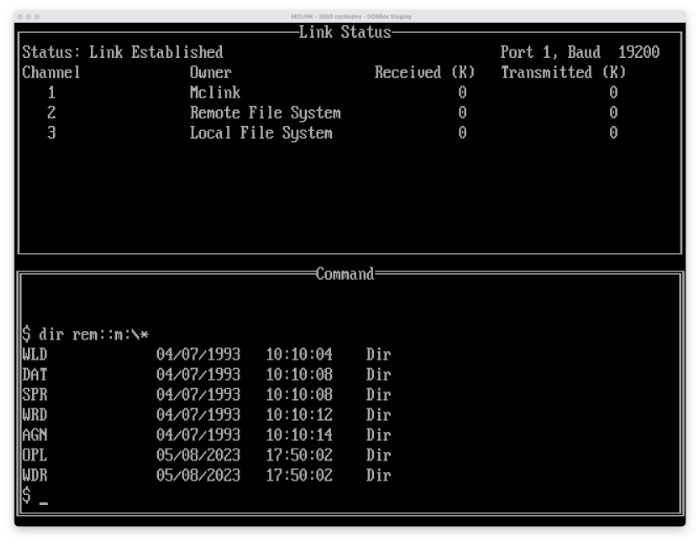

If everything is correct you’ll see mclink connecting and three lines should appear at the top of the window like:

As you can see, I’ve used the command dir rem::m:\* to ask for the directory contents of the ramdisk of the Psion. For more commands on how to exchange information, please locate the MCLINK.DOC file, which explains all of them. Have fun!